Some Kafka Advice

I would like to share some advice when using Apache Kafka and its ecosystem in this article. All these recommendations came from my journey using Apache Kafka and it’s definitively not an exhaustive list.

Topic Management

A Topic is one of the most important elements in Apache Kafka architecture. Here are some tips to help you design and create your topics.

Topic Design

Topic Naming

Topic naming is a critical part of architecting your system. By just seeing the name of the topic you can get a lot of information from it and once your platform starts to grow, processing a lot of exchanges, having a good topic naming convention is important. Here are some keys to help you set up a topic naming convention.

- do not start the topic name with

_. Topics like__consumer_offsets,_schemasor_connect_configare internal topics. - Apache Kafka 2.8 introduces a new internal topic named

@metadata. I would recommend not naming a topic starting with@. - Add the visibility of your topic. Is the topic shared with other applications or it’s a topic only used by your application? It will also help you to manage the access and the ACL to those topics.

- Add the event way, in or out. Are you simply producing to this topic, consuming, or both?

- Add the event type. What kind of data will go to this topic?

- Add the data domain. Like in DDD, data is related to a particular context and it can be useful to add it with the event type.

Finally, you could set up a template for topic naming and ensure that all topics respect this format. For example:

<private/public>_<in/out>_<data domain>_<event type>

Number of partitions

Before choosing the number of partitions for a topic, you should think about the partitioning key. Have in mind that two events produced with the same key will go to the same partition and the order is preserved by partition and not by topic! I would recommend choosing a business key that balances the events across all the partitions and preserving the event order. Using a technical key or a random key like an UUID is not relevant as you can simply produce messages using a null key and let the DefaultPartitioner balance the messages with the sticky partitioner.

The number of partitions is the limit of scalability. It limits parallel processing for a consumer group. I would recommend choosing a highly divisible number. For example 12 or 30. With 12 partitions, you can have:

- 1 consumer instance that deals with 12 partitions

- 2 consumer instances that deal with 6 partitions each

- 3 consumer instances that deal with 4 partitions each

- 4 consumer instances that deal with 3 partitions each

- 6 consumer instances that deal with 2 partitions each

- 12 consumer instances that deal with 1 partition each

Depending on the current load, it’s easy to scale up or down the consumer groups based on seasonality. Picking a high number of partitions will help you parallelize your processing and impact the cluster performance. An important number of partitions increases the number of leaders, replicas, and replication processes. It can lead to performance issues in case of recovery. There is no limit to the number of partitions in a cluster for the moment but the current recommendation is:

The current generic recommendation to have no more than 4000 partitions per broker and no more than 200000 partitions per cluster is not enforced by Kafka. https://cwiki.apache.org/confluence/display/KAFKA/KIP-578%3A+Add+configuration+to+limit+number+of+partitions.

By the way, this recommendation is likely to change in the future with the new Apache Kafka Zookeeper-less version.

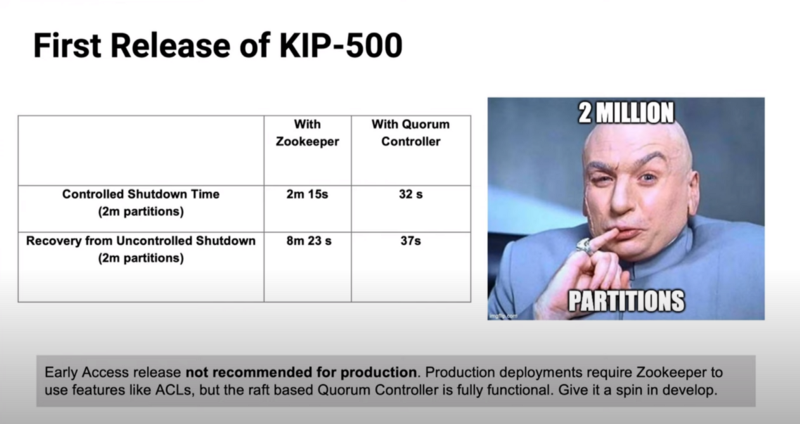

As you can see from this picture extracted from this YouTube video, An uncontrolled shutdown with 2 million partitions only takes 37 seconds to recover with Apache Kafka 2.8 instead of 8 minutes with an older Apache Kafka.

Finally, keep in mind that you can only increase the number of partitions for a topic. You can not shrink it. Increasing the number of partitions can be done at runtime without broker restart or client restart, but it impacts the partitioning strategy and also the consumers.

Topic Creation

First of all, you should not let topic auto-creation on your production cluster. To avoid some potential issue, simply disable the topic auto-creation in your cluster by setting the parameter auto.create.topics.enable to false in the server.properties file.

Do not use GUI tools to create your topics. All this process should be automatized and easily reproducible using tools like Ansible or Terraform. It has to be versioned too.

However, you can let the auto-creation topic on your non-production cluster for testing and development.

Schema Management

Within the Kafka ecosystem, it is easy to exchange structured data with the Schema Registry component. There are several Schema Registry available on the market such as:

Working with structured data using schemas helps guard against side effects as data evolves.

I always recommend going Contract First! Create the schema, discuss it with the affected teams, conduct a code review during the evolution of the schema. Reviewing changes can and should be done not only by technical people but also by business people. These exchanges around the evolution of data may require an effort to synchronize teams, especially if the data impacts a large number of teams, but today the teams are agile and know how to welcome change ;-).

Producer Configuration

Compression

By default, you should enable producer compression for several reasons. It reduces the latency and size required to send data to Kafka, and so it reduces the bandwidth. It also reduces the size of data stored on the disk. Enabling compression does not need extra change to your consumers nor the brokers. However, one’s compression drawback is to increase CPU usage for the clients.

You can choose between four compression types: gzip, snappy, lz4 and zstd. The snappy compression type gives a good balance between CPU usage and compression ratio. Based on my experience, it’s the most used compression type.

Be careful as you can configure the compression type on broker configuration and on topic configuration.

If those configurations are different from the producer compression configuration, the broker will have to decompress and then compress the incoming messages. You should let the default topic and broker configuration to the producer value.

Favour consistency

By default, a producer is configured to minimize latency. It means as soon as a message is produced, it should be available to the consumers. Before dealing with some performance issues, I always go for consistency and so I used to configure the Kafka producer with those parameters:

enable.idempotence=true. By enabling this property, it will prevent you from having duplicate messages and out-of-order messages.acks=all.

This means the leader will wait for the full set of in-sync replicas to acknowledge the record. This guarantees that the record will not be lost as long as at least one in-sync replica remains alive. This is the strongest available guarantee.

The configuration acks is related to the topic configuration min.insync.replicas.

In fact, with the newest release of Apache Kafka 3.0, Producer will enable the strongest delivery guarantee by default as described in the KIP-679;

Tracing

When creating a Kafka producer, a best practice is to set the client.id.

This property is also relevant when creating a Kafka consumer.

An id string to pass to the server when making requests. The purpose of this is to be able to track the source of requests beyond just IP/port by allowing a logical application name to be included in server-side request logging.

If you want to learn more about tracing and client interceptor, I already wrote an article showing how to use Client Interceptor and OpenTracing.